Leif P. Heaney

AI/ML & Systems Engineer

Building Intelligent Systems

I design and ship production AI applications, model-based system architectures, and intelligent automation that turns complex data into confident decisions.

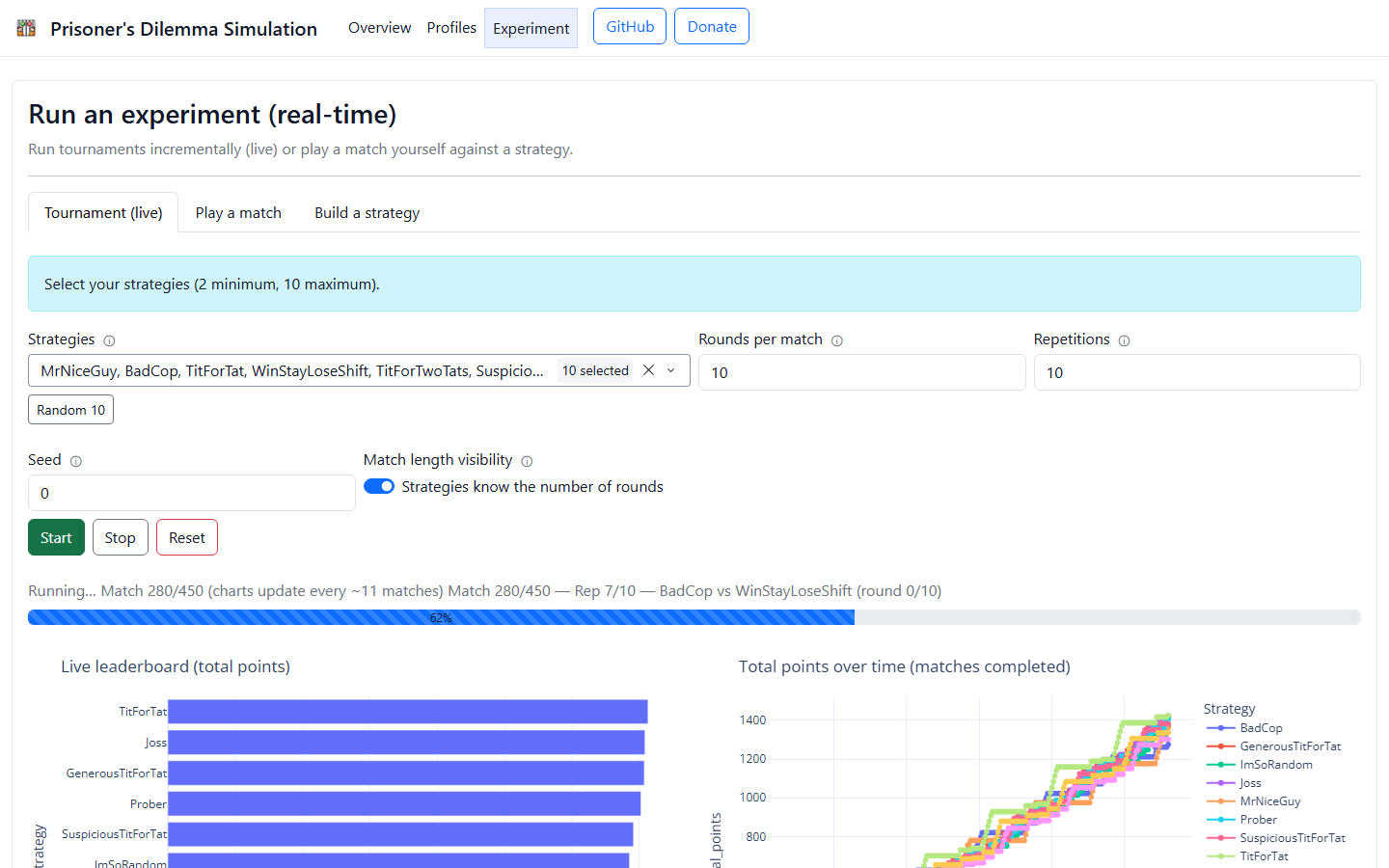

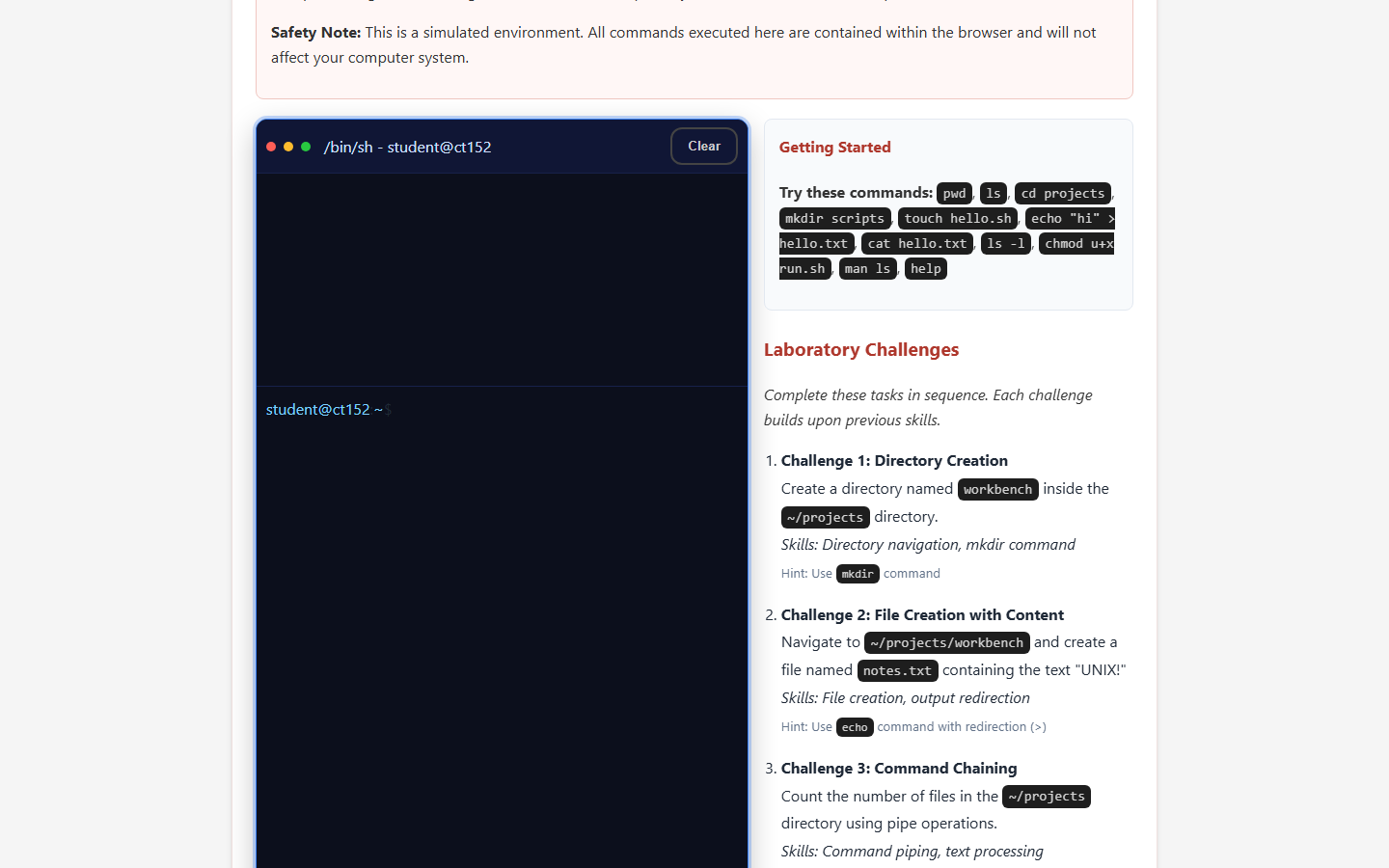

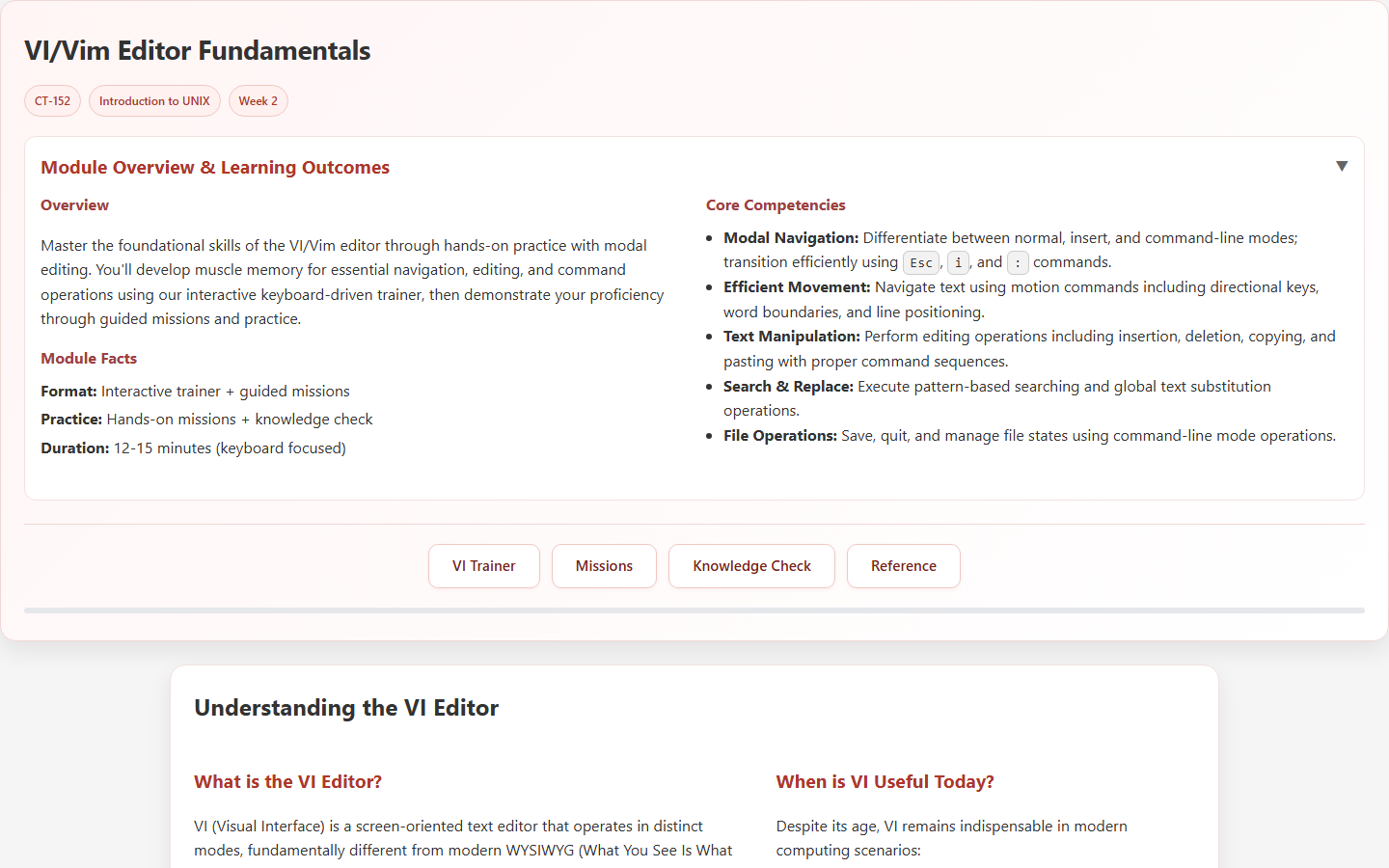

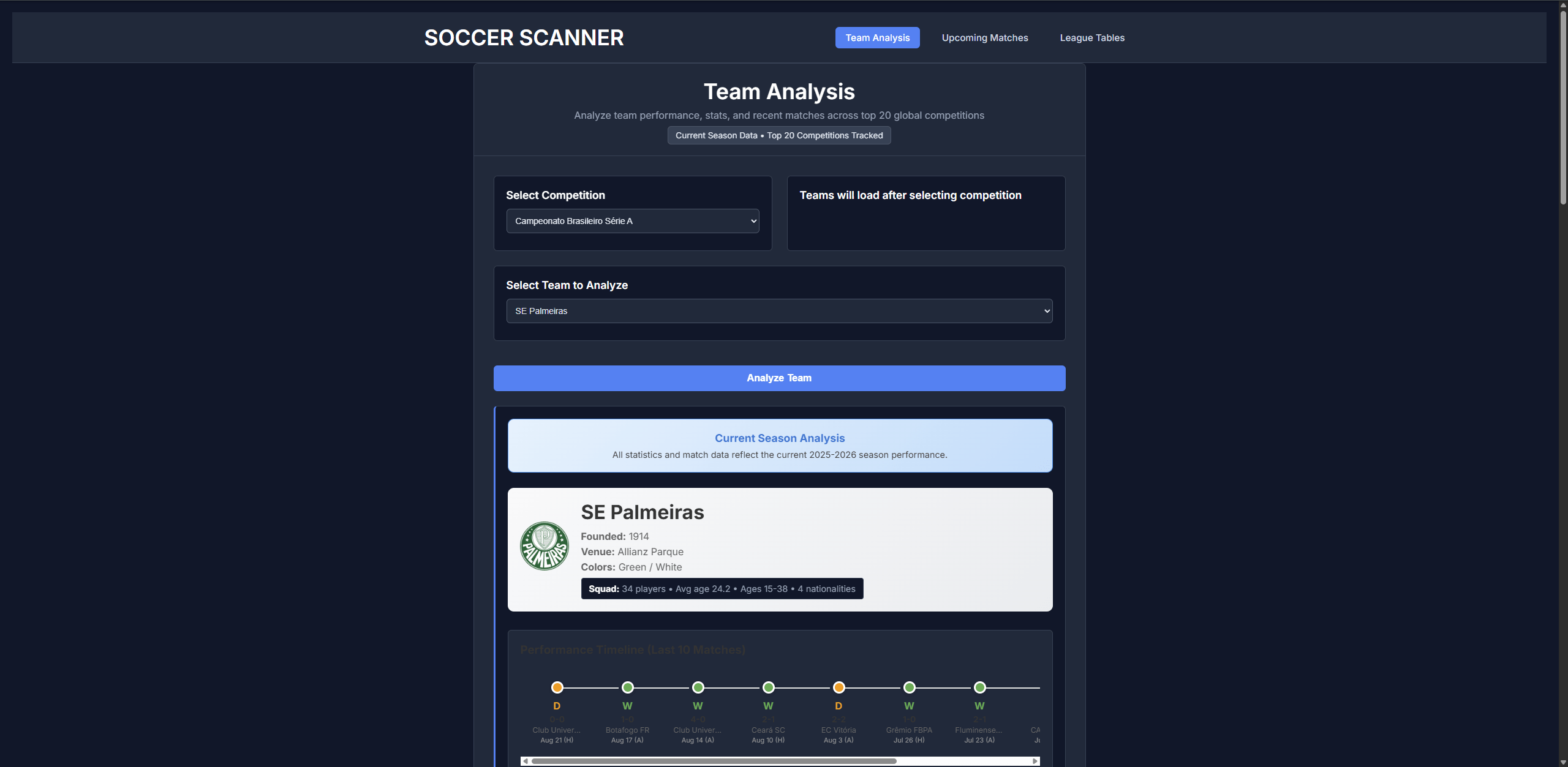

Featured Work

Selected projects and practical experiments.

Writing

Posts, demos, and presentation-driven notes.

Explore

Secondary site sections and supporting resources.

Artificial Intelligence

Imported from the previous site and preserved as a standalone section.

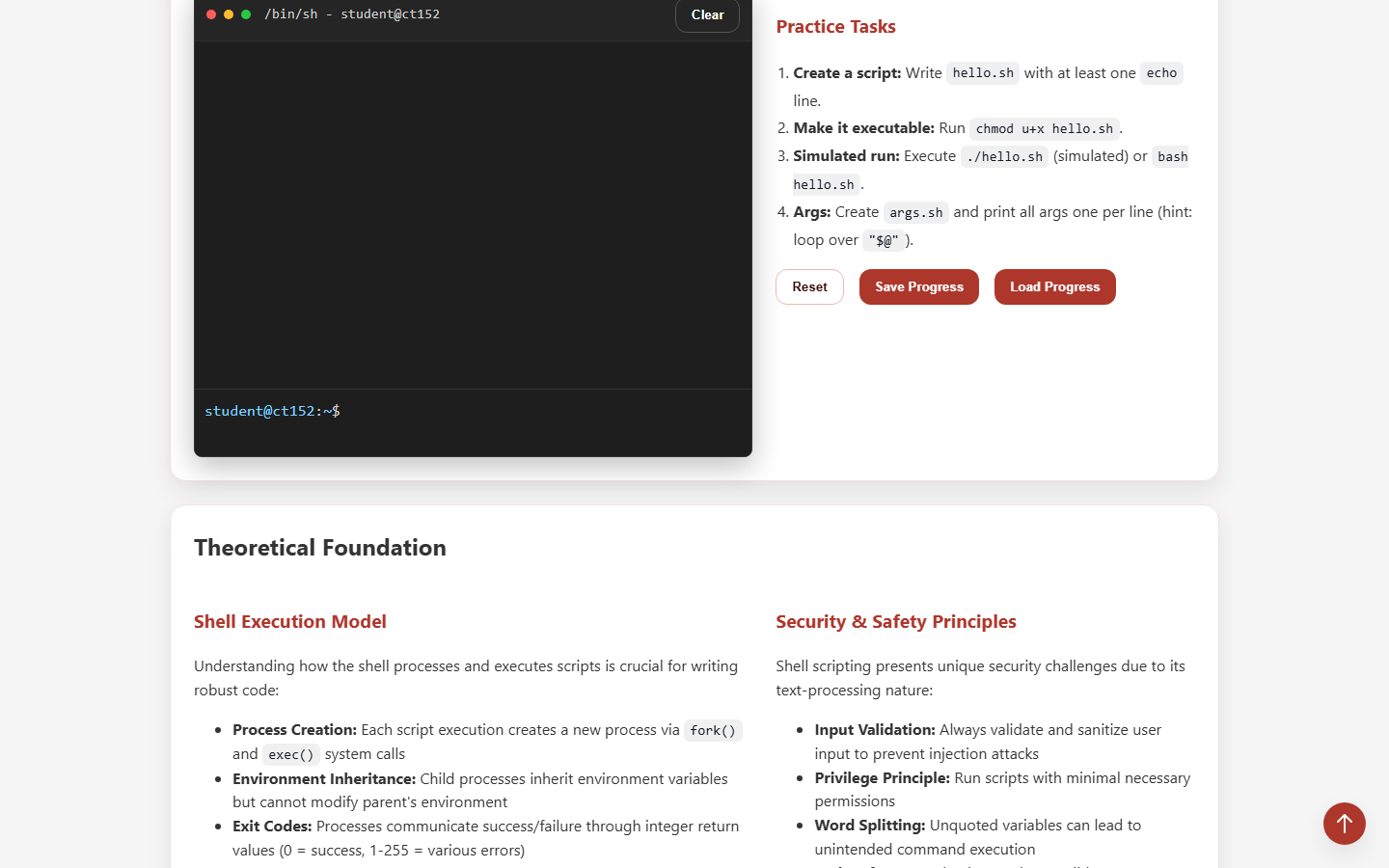

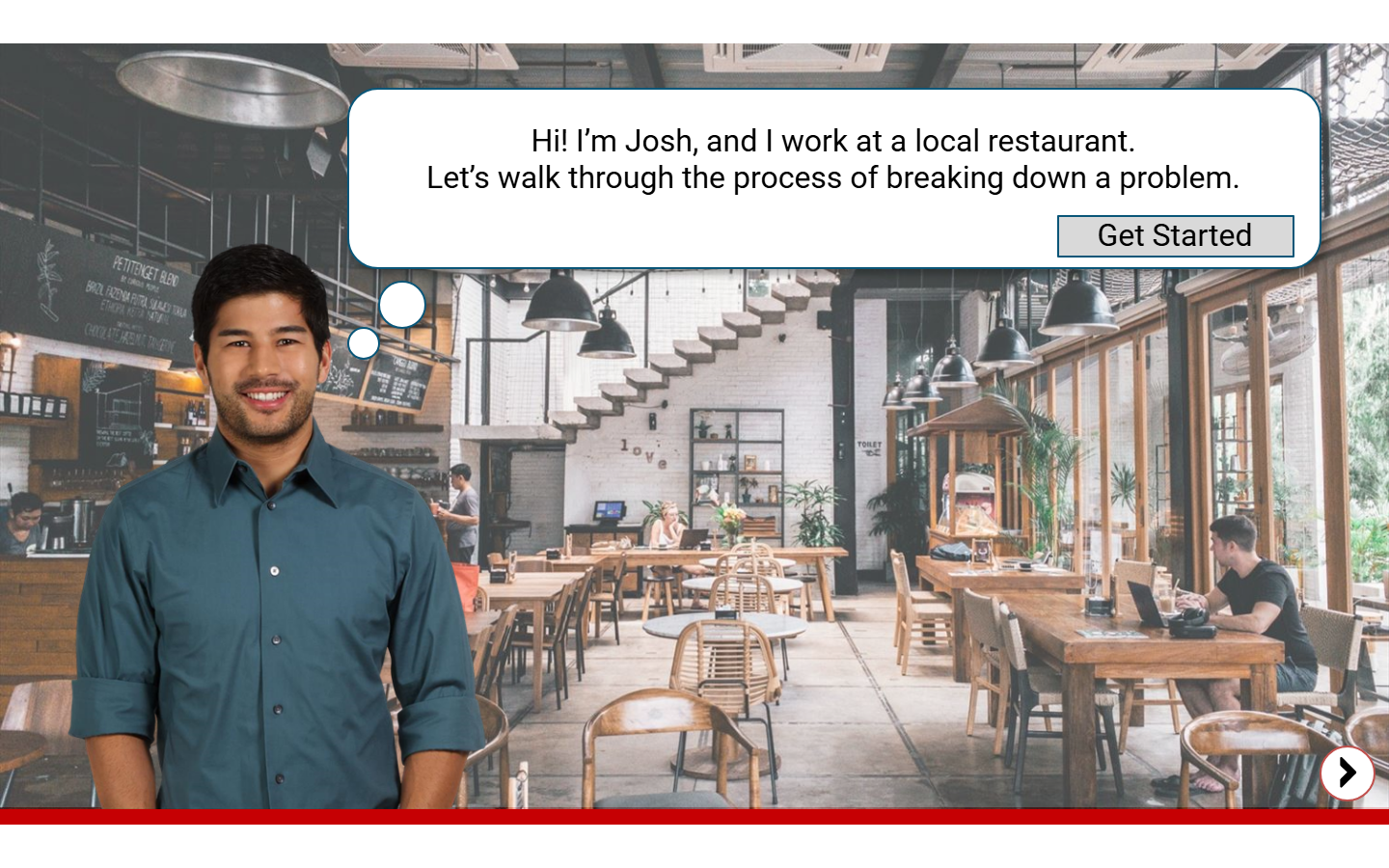

Instructional Design

Imported from the previous site and preserved as a standalone section.

Learning Archive

Imported from the previous site and preserved as a standalone section.

GitHub

Imported from the previous site and preserved as a standalone section.